Act locally, connect globally with IoT and edge computing

There are places so remote and so harsh that humans can't safely explore them (for example, hundreds of miles below the earth's surface, areas that experience extreme temperatures, or other planets). These places might hold important data that could help us better understand earth and its history, as well as life on other planets. But they usually have little to no internet connection, which makes exploring environments inhospitable to humans seem even more impossible.

How do we push the boundaries of what's possible?

The answer to this question is actually on your phone, your smartwatch, and billions of other places on earth: it's the Internet of Things (IoT). Connected devices allow us to extend our senses to remote locations, such as a robot carrying out work on Mars or sensors monitoring remote oil wells.

This is the exciting future for IoT, and it's closer than you think. Already, IoT is delivering deep and precise insights to improve virtually every aspect of our lives. Here are a few examples:

- IoT sensors in a factory can monitor and predict equipment failure before an accident.

- Healthcare providers can provide remote monitoring of patient health—improving patient care.

- Security cameras can better protect people with real-time notifications.

Because these IoT devices are powered by microprocessors or microcontrollers that have limited processing power and memory, they often rely heavily on AWS and the cloud for processing, analytics, storage, and machine learning. But as the number of IoT devices and use cases grows, people are finding that managing these connected devices presents new challenges. Sometimes an internet connection is weak or not available at all, as is often the case in remote locations. For some applications, a trip to the cloud and back isn't possible because of latency requirements (for example, an autonomous car interpreting its environment in real time).

There's also the cost of sending data to the cloud to consider. Some sensors, like those in factories, collect an incredible amount of data, and sending it all to the cloud could get expensive. These barriers are driving some people to the edge, literally.

In this post, I want to talk about edge computing: the power to have compute resources and decision-making capabilities in disparate locations, often with intermittent or no connectivity to the cloud. In other words, it means processing data closer to where it's created.

Where are edge devices?

Increasingly, developers are discovering the benefits of doing some compute and analytics closer to the end user, and even right on devices. By moving data processing closer to the end user, you can reduce latency for critical applications. You can also help manage the massive deluge of data generated by billions of devices, and deliver fast, intelligent, near real-time responsiveness.

Edge devices, like gateways or cameras, can act locally on the data they generate, while still using the cloud for management, analytics, durable storage, and more. Here are a few places you're likely to see edge technologies at work:

Edge devices are critical when it comes to accessing data located in remote locations. There may be little to no internet connection or safety may be an issue. Locations could be miles below the surface of the Earth in a mine or an oil well, in the middle of the jungle, or even on another planet. In addition, robots with edge capabilities can keep workers safer by testing and operating in inhospitable and dangerous locations.

For example, Insitu (a division of Boeing) uses edge technologies on drones that capture terabytes of high-resolution images over millions of hours in extremely remote areas like wildfires, strip mines, and desert wellheads.

Continuously monitoring the state of equipment in real time helps to identify potential breakdowns before they affect production. We commonly see predictive maintenance solutions deployed in industrial settings, such as factories and production facilities, resulting in an increase in equipment lifespan, improved worker safety, and supply chain optimization.
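To give a feel for what on-device monitoring can look like, here's a minimal sketch that flags a reading when it strays far from the recent average. It's one simple technique (a rolling z-score), not a description of any particular customer's solution. The function name `find_anomalies`, the window size, the threshold `k`, and the vibration values are all assumptions I made up for illustration.

```python
from collections import deque
from statistics import mean, pstdev
from typing import List, Sequence


def find_anomalies(readings: Sequence[float], window: int = 5, k: float = 3.0) -> List[int]:
    """Return indexes of readings more than k standard deviations
    away from the mean of the previous `window` readings."""
    history = deque(maxlen=window)  # keeps only the most recent readings
    anomalies = []
    for index, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            # Skip the check when the window is perfectly flat (sigma == 0).
            if sigma > 0 and abs(value - mu) > k * sigma:
                anomalies.append(index)
        history.append(value)
    return anomalies


# Hypothetical vibration readings with one obvious spike at index 6.
vibration = [1.0, 1.1, 0.9, 1.0, 1.1, 1.0, 5.0, 1.0]
flagged = find_anomalies(vibration)
```

A real predictive maintenance model would be far richer than this, but the shape is the same: the device keeps a small amount of recent history and makes the call locally, without waiting on a round trip to the cloud.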

What's more, you can get rich insights at a lower cost by programming your device to filter data locally. Only transmit the data you need for your applications to the cloud. Edge devices can also streamline operations by automating repetitive tasks. For example, in Amazon fulfillment centers, robots assist human workers by helping sort and deliver packages.
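As a rough illustration of filtering data locally, the sketch below forwards a sensor reading only when it differs from the last transmitted value by more than a threshold (sometimes called a deadband filter). The function name `deadband_filter`, the threshold, and the temperature values are hypothetical, and a real device would hand the surviving readings to whatever messaging client it uses.

```python
from typing import Iterable, Iterator, Optional


def deadband_filter(readings: Iterable[float], threshold: float) -> Iterator[float]:
    """Yield only readings that differ from the last transmitted
    reading by more than `threshold`."""
    last_sent: Optional[float] = None
    for value in readings:
        if last_sent is None or abs(value - last_sent) > threshold:
            last_sent = value
            yield value


# Hypothetical temperature readings; small fluctuations stay on the device.
readings = [20.0, 20.1, 20.2, 21.5, 21.6, 25.0, 25.1]
to_send = list(deadband_filter(readings, threshold=1.0))
# to_send is [20.0, 21.5, 25.0]: three messages instead of seven
```

The trade-off is that the cloud sees a coarser signal, so the threshold should reflect how much detail your application actually needs.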

Agriculture companies are improving crop health, yield, and nutrition while also lowering the cost of food with IoT-enabled greenhouses and farms. Edge devices recognize the main growth stages of plants and automatically adjust nutrition, water, and environmental conditions to maximize yields. They can also monitor soil conditions or record crop characteristics, such as yield, shell weight, and moisture during harvest. For example, Bayer Crop Science uses IoT to gain new business insights from agricultural data to help farmers improve the global food system.

Cars and trucks are increasingly gaining the ability to make sense of their environments and even navigate. For example, they can detect another vehicle, use cameras to monitor driver alertness, use voice control to manage car or home settings, or even drive autonomously. These types of advancements require local computing so vehicles and drivers can react in a split second.

To provide uninterrupted service, home monitoring devices need low latency and the ability to compute data locally to notify residents quickly of an intrusion or leak. Examples include connected door locks, video doorbells, security cameras, water leak detectors, and connected thermostats.

Inside the edge

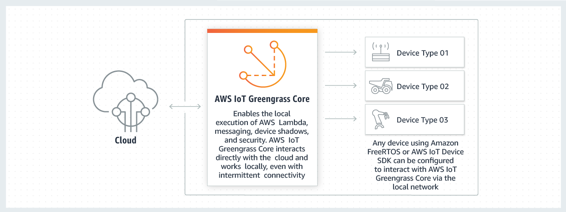

With edge computing, you can take some of the power and functionality that the cloud provides and extend it to edge devices. About two years ago, we launched AWS IoT Greengrass, software that lets you run local compute, messaging, and storage for connected devices in a secure way.

With AWS IoT Greengrass, connected devices can run AWS Lambda functions, execute predictions based on ML models, keep device data in sync, and communicate with other devices securely—even when not connected to the internet.
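To picture what working while disconnected involves, here's a generic store-and-forward sketch: messages queue up on the device and are delivered in order once a connection returns. This is not the AWS IoT Greengrass API. The class `StoreAndForwardBuffer` and the `send` callback are hypothetical names I'm using to show the pattern, and I'm assuming it's acceptable to drop the oldest messages when the buffer fills up.

```python
from collections import deque
from typing import Callable, Deque, List


class StoreAndForwardBuffer:
    """Bounded local queue that holds messages until they can be sent."""

    def __init__(self, max_messages: int) -> None:
        # A deque with maxlen silently drops the oldest entry when full.
        self._queue: Deque[str] = deque(maxlen=max_messages)

    def enqueue(self, message: str) -> None:
        self._queue.append(message)

    def flush(self, send: Callable[[str], bool]) -> int:
        """Try to send queued messages oldest-first.
        Stops at the first failure and returns the number sent."""
        sent = 0
        while self._queue:
            if not send(self._queue[0]):
                break  # still offline; keep the message for next time
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._queue)


buffer = StoreAndForwardBuffer(max_messages=3)
for i in range(5):
    buffer.enqueue(f"reading-{i}")  # the two oldest readings are dropped

delivered: List[str] = []
sent_offline = buffer.flush(lambda message: False)  # no connection yet
sent_online = buffer.flush(lambda message: delivered.append(message) or True)
```

Whether to drop the oldest or the newest data when storage runs out is a design choice that depends on the application. For a security camera you may want the latest frames, while for an audit log you may want the earliest entries.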

AWS IoT Greengrass runs on devices, such as edge gateways or cameras, that collect data, filter and analyze it before sending it to the cloud, and act in near real time. However, the majority of edge devices are smaller, low-power devices with sensors (such as light bulbs or appliances). AWS IoT Greengrass devices can connect to these smaller devices, collect data, and enable them to act locally as well.

These devices often run Amazon FreeRTOS, an open source operating system for microcontrollers. Amazon FreeRTOS makes small, low-power edge devices easy to program, deploy, secure, connect, and manage.

ML at the edge

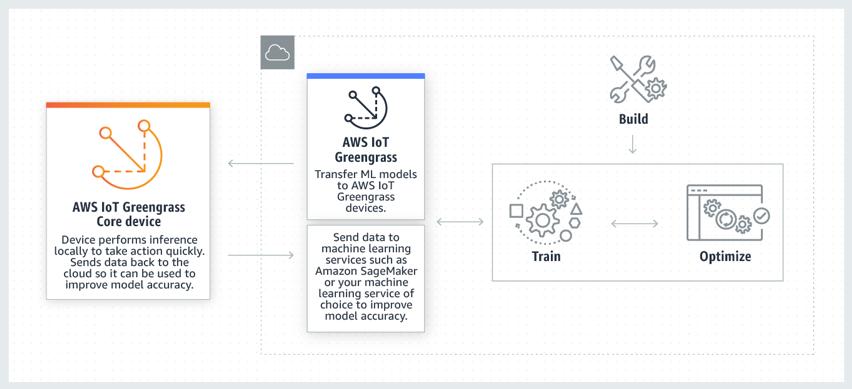

One of the most exciting advancements in IoT and edge computing is the ability to run ML inference locally on devices. ML uses algorithms that learn from existing data, a process called training, to make decisions about new data, a process called inference.

During training, patterns and relationships in the data are identified to build a model. The model allows a system to make intelligent decisions about data that it hasn't encountered before. Optimizing models compresses the model size so that it runs quickly.

Training and optimizing ML models require massive computing resources, so they are a natural fit for the cloud. Inference takes a lot less computing power, and is often done in real time when new data is available. Getting inference results with low latency is important for making sure that your IoT applications can respond quickly to local events.
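To show why inference is so much lighter than training, here's a minimal sketch of a device scoring new data with a model that was trained elsewhere. I'm assuming a simple logistic regression model. The weights, bias, and feature values are invented for illustration, and in practice they would come from a training job in the cloud.

```python
import math
from typing import Sequence


def predict_failure_probability(
    features: Sequence[float], weights: Sequence[float], bias: float
) -> float:
    """Logistic regression inference: a weighted sum passed through a sigmoid."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical parameters, standing in for a model trained in the cloud.
WEIGHTS = [0.8, 1.2]  # e.g. vibration level, temperature rise
BIAS = -5.0

p_normal = predict_failure_probability([2.0, 1.0], WEIGHTS, BIAS)
p_stressed = predict_failure_probability([4.0, 3.0], WEIGHTS, BIAS)
needs_attention = p_stressed > 0.5
```

Each prediction here is a handful of multiplications and one exponential, which is well within reach of a small device. Real models are larger, but the split is the same: the heavy lifting happens once during training, and the device only has to apply the result.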

For example, Vantage Power designs and manufactures powertrain electrification and connectivity technologies for heavy-duty vehicles. They use AWS IoT Greengrass ML Inference to derive insights and predictive analytics models that can then be distributed to the vehicles to take preventative action in real time. Getting ahead of equipment failure saves money and time, and increases safety.

Imagine the possibilities when you add edge ML capabilities to a robot that can sense, process, and act. Local inference allows the robot to make autonomous decisions in near-real time, even without a connection to the cloud.

Intelligent robots are being increasingly used in warehouses to distribute inventory, in homes to carry out tedious housework, and in retail stores to provide customer service. Robotics applications use ML to perform more complex tasks like recognizing an object or face, having a conversation with a person, following a spoken command, or navigating autonomously.

Last November, we announced AWS RoboMaker, a service that makes it easy to develop, test, and deploy intelligent robotics applications at scale. Because AWS RoboMaker is built on AWS IoT Greengrass, your robot can act locally on generated data, while still using the cloud for management, analytics, and durable storage. This also enables a fleet management service with a robot registry, security, and fault tolerance built-in. You can deploy, perform over-the-air (OTA) updates, and manage your robotics applications throughout the lifecycle of your robots.

The California Institute of Technology (CalTech) is using AWS RoboMaker in NASA's Jet Propulsion Laboratory (JPL) to accelerate the development of new functionality for space terrain rovers. For example, they are testing a robotic arm that can mimic the arm movements of a human.

Storage and compute without connectivity

The challenge of storing and processing data in an environment with little to no internet connection is one that many organizations face (for example, in deserts, on ships, or on drones). In situations like this, you need a physical storage and computing device that is small enough to ship and able to handle the rigors of a harsh environment.

Three years ago, we announced AWS Snowball Edge, an edge-computing device to support independent local workloads in remote locations, as well as for data migration. Snowball Edge supports specific Amazon Elastic Compute Cloud (Amazon EC2) instance types, as well as Lambda functions. You can develop and test in AWS, then deploy applications on devices in remote locations to collect, pre-process, and return the data.

For example, Oregon State University's Hatfield Marine Science Center used Snowball Edge to collect and analyze 100 TB of oceanic and coastal images in real time. They used onboard compute capabilities, then transferred the data to the cloud when ashore.

Looking ahead

Whether you're trying to discover and explore remote locations, save lives, improve production, or just make the perfect flatbread, IoT and edge technologies can help get you there.

At AWS, when we think about the future of hybrid, we believe that most workloads currently in data centers will be in the cloud and the on-premises infrastructure will be these billions of devices that sit on the edge. On-premises devices will be in our homes, offices, oil fields, space, planes, ships, and more.

This makes the cloud more important than ever: connected devices need a secure platform to aggregate and analyze all the data. This view from the top allows organizations to make better decisions, improve end-user experiences, and uncover new opportunities.

If you're interested in learning more about IoT and edge computing, consider joining me at AWS re:Invent 2019 in Las Vegas, December 2 – 6.