Expanding the Cloud - Adding the Incredible Power of the Amazon EC2 Cluster GPU Instances

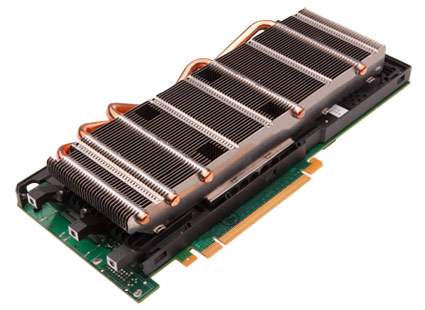

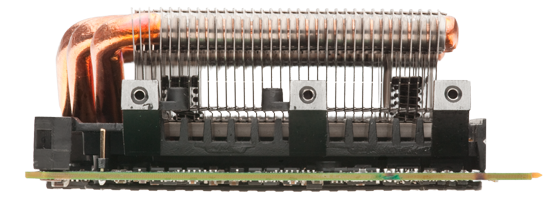

Today Amazon Web Services takes another step on the continuous innovation path by announcing a new Amazon EC2 instance type: the Cluster GPU Instance. Based on the Cluster Compute instance type, the Cluster GPU instance adds two NVIDIA Tesla M2050 GPUs offering GPU-based computational power of over one TeraFLOPS per instance. This incredible power is available for anyone to use in the usual pay-as-you-go model, removing the investment barrier that has kept many organizations from adopting GPUs for their workloads even though they knew there would be a significant performance benefit.

From financial processing and traditional oil & gas exploration HPC applications to integrating complex 3D graphics into online and mobile applications, the applications of GPU processing appear to be limitless. We believe that making these GPU resources available for everyone to use at low cost will drive new innovation in the application of highly parallel programming models.

From CPU to GPU

Building general purpose architectures has always been hard; there are often so many conflicting requirements that no single architecture can serve them all, so we have often ended up focusing on one subset of the requirements and serving that area really well. For example, the most fundamental abstraction trade-off has always been latency versus throughput. These trade-offs have even impacted the way the lowest level building blocks in our computer architectures have been designed. Modern CPUs strongly favor lower latency of operations, with clock cycles in the nanoseconds, and we have built general purpose software architectures that can exploit these low latencies very well. Now that our ability to generate higher and higher clock rates has stalled and CPU architectural improvements have shifted focus towards multiple cores, we see that it is becoming harder to use these computer systems efficiently.

One trade-off area where our general purpose CPUs were not performing well was that of massive fine grain parallelism. Graphics processing is one such area with huge computational requirements, but where each of the tasks is relatively small and often a set of operations is performed on data in the form of a pipeline. The throughput of this pipeline is more important than the latency of the individual operations. Because of its focus on latency, the generic CPU yielded a rather inefficient system for graphics processing. This led to the birth of the Graphics Processing Unit (GPU), which was focused on providing a very fine grained parallel model, with processing organized in multiple stages through which the data would flow. The model of a GPU is that of task parallelism describing the different stages in the pipeline, as well as data parallelism within each stage, resulting in a highly efficient, high throughput computation architecture.

The early GPU systems were very vendor specific and mostly consisted of graphic operators implemented in hardware that were able to operate on data streams in parallel. This yielded a whole new generation of computer architectures where suddenly relatively simple workstations could be used for very complex graphics tasks such as Computer Aided Design. However, these fixed functions for vertex and fragment operations eventually became too restrictive for the evolution of next generation graphics, so new GPU architectures were developed where user-specific programs could be run in each of the stages of the pipeline. As each of these programs was becoming more complex and demand for new operations such as geometric processing increased, the GPU architecture evolved into one long feed-forward pipeline consisting of generic 32-bit processing units handling both task and data parallelism. The different stages were then load balanced across the available units.

General Purpose GPU programming

Programming the GPU evolved in a similar fashion; it started with the early APIs being mainly pass-through to the operations programmed in hardware. The second generation APIs to GPU systems were still graphics-oriented but under the covers implemented dynamic assignments of dedicated tasks over the generic pipeline. A third generation of APIs, however, left the graphics-specific interfaces behind and instead focused on exposing the pipeline as a generic highly parallel engine supporting task and data parallelism.

Already with the second generation APIs, researchers and engineers had started to use the GPU for general purpose computing, as the generic processing units of the modern GPU were extremely well suited to any system that could be decomposed into fine grain parallel tasks. But with the third generation interfaces, the true power of General Purpose GPU programming was unlocked. In the taxonomy of traditional parallelism, the programming of the pipeline is a combination of SIMD (single instruction, multiple data) inside a stage and SPMD (single program, multiple data) for how results get routed between stages. A programmer will write a series of threads, each defining the individual SIMD tasks, and then an SPMD program to execute those threads and collect and store/combine the results of those operations. The input data is often organized as a Grid.

NVIDIA's CUDA SDK provides a higher level interface with extensions to the C language that support both multi-threading and data parallelism. The developer writes C functions, each dubbed a "kernel", that operate on data and are executed by multiple threads according to an execution configuration. To easily facilitate different input models, threads can be organized into thread-blocks, which are hierarchies for one-, two- and three-dimensional processing of vectors, matrices and volumes. Memories are organized into global memory, per-thread-block memory and per-thread private memory.
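To make the kernel and thread-block vocabulary a bit more concrete, here is a minimal sketch in plain Python (not CUDA) that emulates how a one-dimensional execution configuration maps threads onto data. The names `saxpy_kernel`, `launch` and `saxpy` are hypothetical and chosen for illustration only; the one thing carried over from CUDA is the index formula, which mirrors what a kernel computes from `blockIdx`, `blockDim` and `threadIdx`. A real GPU runs these threads in parallel rather than in nested loops.

```python
def saxpy_kernel(block_idx, block_dim, thread_idx, a, x, y, out):
    """Body executed by one simulated thread: out[i] = a * x[i] + y[i]."""
    i = block_idx * block_dim + thread_idx  # global index of this thread
    if i < len(x):                          # guard: the last block may overshoot the data
        out[i] = a * x[i] + y[i]


def launch(kernel, grid_dim, block_dim, *args):
    """Sequential stand-in for launching grid_dim blocks of block_dim threads each."""
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)


def saxpy(a, x, y, block_dim=4):
    """Pick an execution configuration that covers x, then run the kernel."""
    out = [0.0] * len(x)
    grid_dim = (len(x) + block_dim - 1) // block_dim  # round up to whole blocks
    launch(saxpy_kernel, grid_dim, block_dim, a, x, y, out)
    return out
```

With five elements and a block size of four, the sketch launches two blocks (eight threads), and the bounds guard keeps the three surplus threads from touching memory they do not own.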

This combination of very basic primitives drives a whole range of different programming styles: map & reduce, scatter & gather & sort, as well as stream filtering and stream scanning. All of these run at extreme throughput, as high-end GPUs such as those supporting the Tesla "Fermi" CUDA architecture have close to 500 cores generating well over 500 GigaFLOPS per GPU.
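As an illustration of what these styles look like, the following plain Python sketch shows a map, a pairwise (tree) reduction and a Hillis-Steele inclusive scan. The function names are hypothetical, and the code runs sequentially; the point is only the shape of the computation, where every pass consists of independent element-wise operations that a GPU could hand to separate threads.

```python
def map_step(f, data):
    """Map: apply f to every element independently (one thread per element)."""
    return [f(v) for v in data]


def tree_reduce(op, data):
    """Pairwise reduction: each pass combines neighbours and halves the list."""
    values = list(data)
    if not values:
        raise ValueError("cannot reduce an empty sequence")
    while len(values) > 1:
        paired = [op(values[i], values[i + 1]) for i in range(0, len(values) - 1, 2)]
        if len(values) % 2:          # an odd element is carried to the next pass
            paired.append(values[-1])
        values = paired
    return values[0]


def inclusive_scan(op, data):
    """Hillis-Steele scan: about log2(n) passes, each one data-parallel."""
    values = list(data)
    offset = 1
    while offset < len(values):
        values = [
            values[i] if i < offset else op(values[i - offset], values[i])
            for i in range(len(values))
        ]
        offset *= 2
    return values
```

For example, `tree_reduce(lambda a, b: a + b, map_step(lambda v: v * v, [1, 2, 3, 4, 5]))` computes a sum of squares in three reduction passes instead of four sequential additions.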

The NVIDIA "Fermi" architecture as implemented in the NVIDIA Tesla 20-series GPUs (where we are providing instances with Tesla M2050 GPUs) is a major step up from the earlier GPUs as it provides high performance double precision floating point operations (FP64) and ECC GDDR5 memory.

The Amazon EC2 Cluster GPU instance

Last week it was revealed that the world's fastest supercomputer is now the Tianhe-1A with a peak performance of 4.701 PetaFLOPS. The Tianhe-1A runs on 14,336 Xeon X5670 processors and 7,168 NVIDIA Tesla M2050 general purpose GPUs. Each node in the system consists of two Xeon processors and one GPU.

The EC2 Cluster GPU instance provides even more power in each instance: the two Xeon X5570 processors are combined with two NVIDIA Tesla M2050 GPUs. This gives you more than a TeraFLOPS of processing power per instance. By default we allow any customer to instantiate clusters of up to 8 instances, making the incredible power of 8 TeraFLOPS available for anyone to use. This instance limit is a default usage limit, not a technology limit. If you need larger clusters we can make those available on request via the Amazon EC2 instance request form. If you are willing to switch to single precision floating point, the Tesla M2050 will even give you a TeraFLOPS of performance per GPU, doubling the overall performance.
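The arithmetic behind these figures can be sketched in a few lines of Python. The per-GPU rates of 515 GigaFLOPS (double precision) and 1,030 GigaFLOPS (single precision) are NVIDIA's published peak numbers for the Tesla M2050 and are an assumption here, since the text above only says "well over 500 GigaFLOPS". The helper name is hypothetical, and the result counts GPU peak throughput only, ignoring the two Xeon processors.

```python
GPUS_PER_INSTANCE = 2        # two Tesla M2050 GPUs per Cluster GPU instance
DEFAULT_INSTANCE_LIMIT = 8   # default cluster size limit mentioned above

# Assumption: NVIDIA's published peak rates for the Tesla M2050.
DP_GFLOPS_PER_GPU = 515      # double precision
SP_GFLOPS_PER_GPU = 1030     # single precision


def cluster_peak_tflops(gflops_per_gpu, instances=DEFAULT_INSTANCE_LIMIT):
    """Peak GPU throughput of a cluster, in TeraFLOPS."""
    return gflops_per_gpu * GPUS_PER_INSTANCE * instances / 1000
```

Under these assumptions a single instance peaks at about 1.03 TeraFLOPS in double precision, a default 8-instance cluster at about 8.24 TeraFLOPS, and the same cluster at about 16.48 TeraFLOPS in single precision. These are theoretical peaks; sustained application performance will be lower.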

We have already seen early customers out of the life sciences, financial, oil & gas, movie studios and graphics industries becoming very excited about the power these instances give them. Although everyone in the industry has known for years that General Purpose GPU processing is a direction with amazing potential, making major investments has been seen as high-risk given how fast moving the technology and programming models were.

Cluster GPU programming in the Cloud with Amazon Web Services changes all of that. The power of the world's most advanced GPUs is now available for everyone to use without any up-front investment, removing the risks and uncertainties that owning your own GPU infrastructure would involve. We have already seen with the EC2 Cluster Compute instances that "traditional" HPC has been unlocked for everyone to use, but Cluster GPU instances take this one step further, making innovative resources that were even outside the reach of most professionals now available for everyone to use at very low cost. An 8 TeraFLOPS HPC cluster of GPU-enabled nodes will now only cost you about $17 per hour.
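To put that hourly figure in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the rounded numbers from the text ($17 per hour, 8 instances), so the derived per-instance rate is approximate and is not a quoted price. The function names are hypothetical, and rounding partial hours up to whole instance-hours is an assumption about billing.

```python
import math

CLUSTER_RATE_USD_PER_HOUR = 17.0  # "about $17 per hour" from the text
CLUSTER_INSTANCES = 8             # the 8-instance default cluster


def per_instance_rate():
    """Approximate hourly rate of one instance, derived from the rounded cluster rate."""
    return CLUSTER_RATE_USD_PER_HOUR / CLUSTER_INSTANCES


def run_cost(hours, instances=CLUSTER_INSTANCES):
    """Estimated cost of a run, assuming partial hours are billed as full hours."""
    billed_hours = math.ceil(hours)
    return billed_hours * instances * per_instance_rate()
```

With these inputs, a job that keeps the full 8-instance cluster busy for two and a half hours would come to roughly $51, with nothing left to pay once the instances are terminated.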

CPU and/or GPU

As exciting as it is to make GPU programming available for everyone to use, unlocking its amazing potential, it certainly doesn't mean that this is the beginning of the end of CPU based High Performance Computing. Both GPU and CPU architectures have their sweet spots and although I believe we will see a shift in the direction of GPU programming, CPU based HPC will remain very important.

GPUs work best on problem sets that are ideally solved using massive fine-grained parallelism, using for example at least 5,000 - 10,000 threads. To be able to build applications that exploit this level of parallelism one needs to enter a very specific mindset of kernels, kernel functions, thread-blocks, grids of thread-blocks, mapping to hierarchical memory, etc. Configuring kernel execution is not a trivial exercise and requires GPU device specific knowledge. There are a number of techniques that every programmer has grown up with, such as branching, that are not available, or should be avoided, on GPUs if one wants to truly exploit their power.
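Why branching hurts can be shown with a deliberately simplified cost model in Python. NVIDIA GPUs execute threads in groups of 32 called warps, and when threads within one warp take different sides of a branch, the warp runs both sides one after the other. The function below is a hypothetical illustration that only counts how many branch sides each warp has to execute; it ignores everything else that affects real performance.

```python
def divergent_passes(conditions, warp_size=32):
    """Count branch-side executions when every warp runs each side any of its threads takes.

    conditions holds one boolean per thread: the outcome of the branch test.
    """
    passes = 0
    for start in range(0, len(conditions), warp_size):
        warp = conditions[start:start + warp_size]
        passes += len(set(warp))  # 1 if the warp agrees, 2 if it diverges
    return passes
```

For 64 threads whose branch outcome alternates from thread to thread, both warps diverge and the model counts 4 passes. If the same 64 outcomes are arranged so that the first 32 threads take one side and the last 32 the other, it counts only 2. Organizing data so that neighbouring threads make the same decision is one common way to limit this cost.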

HPC programming for CPUs is very convenient compared to GPU programming, as the power of traditional serial programming can be combined with that of using multiple powerful processors. Although efficient parallel programming on CPUs absolutely also requires a certain level of expertise, its models and capabilities are closer to those of traditional programming. Where kernel functions on GPUs are best written as simple data operations combined with specific math operations, CPU based HPC programming can take on any level of complexity without any of the restrictions of, for example, the GPU memory models. Applications, libraries and tools for CPU programming are plentiful and very mature, giving developers a wide range of options and programming paradigms.

One area where I expect progress will be made with the availability of the Cluster GPU instances is a combination of both HPC programming models that brings together the power of CPUs and GPUs; after all, the Cluster GPU instances are based on the Cluster Compute Instances with their powerful quad core Xeon X5570 processors.

Some good insight into the work that is needed to convert certain algorithms to run efficiently on GPUs is the UCB/NVIDIA "Designing Efficient Sorting Algorithms for Manycore GPUs" paper.

Cluster Compute, Cluster GPU and Amazon EMR

Amazon Elastic MapReduce (EMR) makes it very easy to run Hadoop's (MapReduce) massively parallel processing tasks. Amazon EMR will handle workload parallelization, node configuration and scaling, and cluster management, such that our customers can focus on writing the actual HPC programs.

Starting today Amazon EMR can take advantage of the Cluster Compute and Cluster GPU instances, giving customers ever more powerful components on which to base their large scale data processing and analysis. Programs that rely on significant network I/O will also benefit from the low latency, full bisection bandwidth 10Gbps Ethernet network between the instances in the clusters.

Where to go from here?

For more information on the new Cluster GPU Instances for Amazon EC2 visit the High Performance Computing with Amazon EC2 page. For more information on using the HPC Cluster instances with Amazon Elastic MapReduce see the Amazon EMR detail page. More details can also be found on the AWS Developer blog. James Hamilton has some interesting insights on GPGPU.